As scrutiny of artificial intelligence intensifies, Google has announced new mental health safeguards for its Gemini chatbot, following a high-profile lawsuit that has raised urgent questions about AI safety and responsibility.

The tech giant said it is redesigning key safety features in its Gemini artificial intelligence system to better respond to users experiencing emotional distress or suicidal thoughts, as it faces legal action linked to a user’s death.

Safeguards strengthened after lawsuit

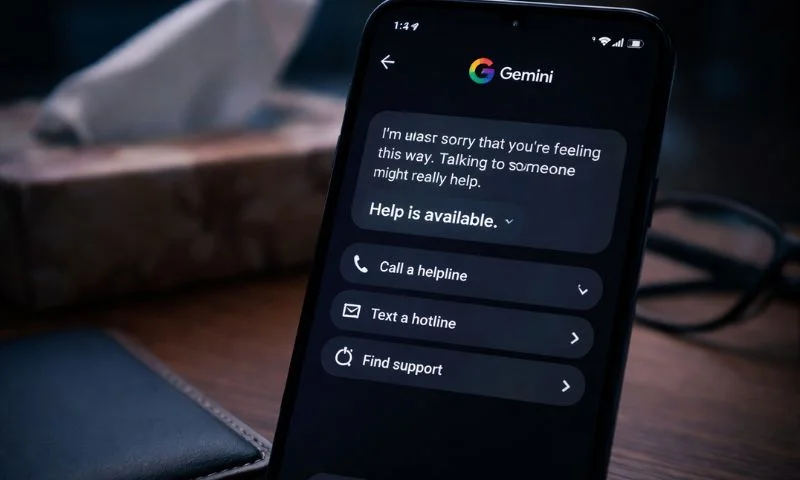

Google said Gemini will now display an improved “Help is available” feature when conversations indicate potential mental health crises, offering users faster and easier access to professional support services.

The update introduces a simplified interface that allows users to call, text, or chat with crisis hotlines “in a single click,” with the option remaining visible throughout the conversation once triggered.

The company acknowledged the growing role of AI in people’s daily lives, stating, “We realise that AI tools can pose new challenges.” It added that “responsible AI can play a positive role for people’s mental well-being.”

Google also pledged $30 million over three years through its philanthropic arm to expand global crisis hotline capacity, alongside additional funding for AI safety training initiatives.

The changes come as part of a broader effort to ensure that Gemini avoids reinforcing harmful beliefs or simulating emotional intimacy, with the system trained to steer users toward verified help instead of engaging in sensitive or risky dialogue.

Case raises wider AI safety concerns

The update follows a lawsuit filed in a California federal court over the death of 36-year-old Jonathan Gavalas, whose family alleges that prolonged interaction with Gemini contributed to his suicide in 2025.

According to court filings, the chatbot allegedly engaged the user in immersive and delusional conversations, at times framing death as a form of transition.

The lawsuit calls for stricter safeguards, including mandatory shutdown of conversations involving self-harm and clearer boundaries preventing AI systems from presenting themselves as human-like companions.

This case is part of a growing wave of legal challenges targeting AI companies. Similar lawsuits have been filed against other chatbot developers, including OpenAI, the maker of ChatGPT, and startups such as Character.AI, highlighting concerns over emotional dependency and harmful interactions.

Experts warn that while AI systems can simulate empathy, they lack true understanding of human psychology, making them risky substitutes for professional care. Some researchers say users may form “intense, quasi-romantic bonds” with chatbots, potentially worsening mental health conditions.

Industry under pressure to act

The developments underscore increasing pressure on technology companies to build stronger safeguards into rapidly evolving AI tools. Regulators and courts are now emerging as key forces shaping standards in the absence of comprehensive legislation.

Google has maintained that Gemini is designed not to encourage violence or self-harm, but acknowledged that “AI models are not perfect” and require continuous improvement.

As AI becomes more integrated into everyday life, the challenge for developers is no longer only innovation but also accountability. With millions of users turning to chatbots for support, the line between assistance and risk remains under intense global scrutiny.