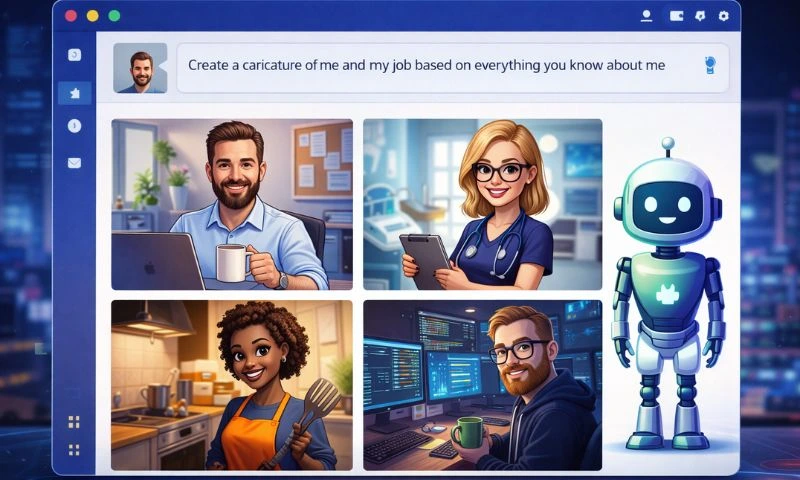

A new viral AI trend has taken social media by storm as people use ChatGPT and similar tools to generate personalized AI caricatures of themselves, blending selfies with details about their job roles, interests and personality. While the trend is fun and creative, technology experts warn it carries real privacy and security risks that users must not ignore.

The trend started with a simple prompt that many users are now repeating on platforms like Instagram, X, WhatsApp and LinkedIn: “Create a caricature of me and my job based on everything you know about me.” Based on this, ChatGPT and other AI systems generate cartoon-style portraits that include professional props such as laptops, coffee mugs or tools of the trade, making the caricatures feel personal and relevant.

On the surface, these caricatures seem harmless. Users enjoy sharing them with friends and colleagues because the images often reflect their work persona and hobbies in imaginative ways. Many people see the trend as a playful use of the latest generative AI capabilities that can turn simple selfies into stylized digital portraits in seconds.

However, cybersecurity specialists have raised alarms about what this trend means for digital privacy and data protection. Unlike earlier selfie filters or avatars that simply transformed facial features, ChatGPT caricatures often require users to provide detailed inputs, including photographs and contextual descriptions. Combined with an AI’s memory of previous chats and usage patterns, this information can form a rich profile of a person’s life.

Experts warn that uploading high-resolution photos, personal descriptors and job details gives AI platforms access to far more data than users may realize. Once images and associated information are processed by AI systems, they may be stored, reused or shared with affiliates under platform policies that are complex and often broad. In some regions, users can choose to disable data sharing features, but many remain unaware of these settings.

Security researchers also worry that repeated participation in such trends could normalize oversharing information with AI tools, making users more likely to provide sensitive or professionally identifying data without considering long-term consequences. In extreme cases, personal details obtained from avatars and AI interactions might be exploited for scams, identity theft or even deepfake content.

Specialists urge people to protect themselves by not uploading real photos, keeping prompts generic, and reviewing privacy policies before joining viral AI trends. They also recommend disabling chat memory and not sharing anything with an AI tool that users would not post publicly.

The ChatGPT caricature phenomenon highlights the growing tension between creativity and privacy in the age of generative AI. While this trend offers a fun way to express personality online, it also serves as a reminder that digital footprints can be deeper and longer-lasting than many users expect.